Lunatic is a VM and Rust library that brings Elixir-like concurrency and fault tolerance to Rust.

About 1.5 years ago I wrote a blog post about bringing some popular ideas from Erlang and Elixir to Rust. It ended up on the front page of Hacker News and received a fair amount of traffic. Now seems like a good time to give a status update on the project and look at what worked well and what didn't.

Phase 1 - A concurrency model without memory sharing

Picking WebAssembly as the core abstraction layer probably had the biggest impact on the whole project. Lunatic's concurrency model is unique in Rust's ecosystem: unlike threads or async tasks, lunatic's processes don't share any memory. This is the core idea behind Elixir's programming model and its “let it crash” philosophy.

The IT Crowd: “Have you tried turning it off and on again?”

Long-running tasks tend to drift into weird states that the developer couldn't predict. Instead of trying to recover from every possible failure scenario, the best course of action is usually to restart them. The fresh state is always more likely to be well tested, because it's the one the developer exercises constantly while writing the code: write code, compile, run the app from a fresh state, repeat. Elixir has already proven that this approach works well in practice.
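The restart idea can be sketched in plain Rust with `std::panic::catch_unwind`. This is a minimal sketch, not lunatic's API: `supervise` is a hypothetical helper, and catching a panic is a much weaker boundary than a lunatic process, because it does not reset any memory the task shared with the rest of the program.

```rust
use std::panic::{self, AssertUnwindSafe};

/// Hypothetical helper (not part of lunatic): runs `task`, and whenever it panics,
/// starts it again from scratch, up to `max_restarts` times.
/// Returns the task's result together with the number of restarts that were needed.
fn supervise<T, F>(max_restarts: u32, mut task: F) -> Result<(T, u32), String>
where
    F: FnMut(u32) -> T,
{
    for attempt in 0..=max_restarts {
        // Every attempt re-enters the task, so state it builds locally starts out fresh.
        match panic::catch_unwind(AssertUnwindSafe(|| task(attempt))) {
            Ok(value) => return Ok((value, attempt)),
            Err(_) => eprintln!("attempt {attempt} crashed, restarting"),
        }
    }
    Err(format!("gave up after {max_restarts} restarts"))
}

fn main() {
    // Simulated transient fault: the first two attempts end up in a "weird" state and crash.
    let result = supervise(3, |attempt| {
        let mut buffer: Vec<u32> = Vec::new(); // fresh state on every (re)start
        if attempt < 2 {
            panic!("weird state on attempt {attempt}");
        }
        buffer.push(42);
        buffer[0]
    });
    println!("{result:?}"); // Ok((42, 2))
}
```

The supervisor never tries to repair the crashed attempt; it simply discards it and starts over, which is the behavior the paragraph above argues for.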

The AXD301 has achieved a NINE nines reliability (yes, you read that right, 99.9999999%). Let’s put this in context: 5 nines is reckoned to be good (5.2 minutes of downtime/year). 7 nines almost unachievable … but we did 9.

Why is this? No shared state, plus a sophisticated error recovery model.

- Joe Armstrong (co-designer of the Erlang programming language)
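The numbers in the quote are easy to check. Assuming a 365.25-day year, n nines of availability allow a fraction 10^-n of the year as downtime:

```rust
/// Expected downtime per year, in seconds, for an availability of `nines` nines
/// (e.g. 5 nines = 99.999%), assuming a 365.25-day year.
fn downtime_seconds_per_year(nines: i32) -> f64 {
    let seconds_per_year = 365.25 * 24.0 * 60.0 * 60.0;
    seconds_per_year * 10f64.powi(-nines)
}

fn main() {
    for nines in [5, 7, 9] {
        let secs = downtime_seconds_per_year(nines);
        println!("{nines} nines: {:.2} min = {:.1} ms per year", secs / 60.0, secs * 1000.0);
    }
}
```

Five nines comes out at about 5.26 minutes per year, in line with the quote, while nine nines leaves roughly 32 milliseconds of downtime per year.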

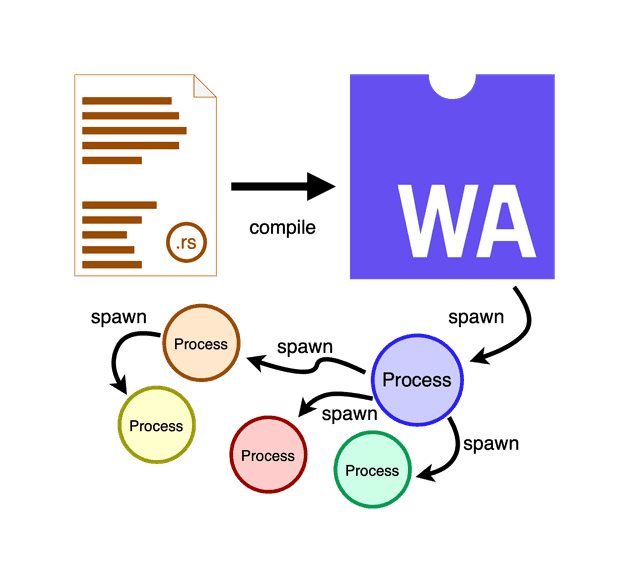

To guarantee that a process can only mess up its own state, lunatic gives each process its own memory space. This holds even if your Rust code links to a C library that uses global shared state. Each lunatic process is a WebAssembly instance spawned from the same .wasm module. The architecture looks something like this:

[Figure: From Rust source file to a process]

The Rust project is compiled to a WebAssembly module, and a process (a Wasm instance) is spawned from it. Each process holds a reference to the initial module and can spawn other sub-processes. WebAssembly instances are lightweight and fast to spawn. They are scheduled in user space on a multithreaded async executor and have good performance characteristics. The only way for them to exchange data is through message passing.
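A rough approximation of this model using only the standard library: a worker that owns its state exclusively and can only be reached through messages. It is a sketch, not lunatic's API. `Message` and `spawn_worker` are hypothetical names, and OS threads still share one address space, so here Rust's ownership rules enforce the discipline rather than a separate Wasm memory per process.

```rust
use std::sync::mpsc;
use std::thread;

/// Messages the worker understands; ownership of each payload moves with the message.
enum Message {
    Add(Vec<i64>),
    Sum(mpsc::Sender<i64>),
}

/// Spawns a worker whose state is private and reachable only through its mailbox.
fn spawn_worker() -> mpsc::Sender<Message> {
    let (tx, rx) = mpsc::channel();
    thread::spawn(move || {
        let mut numbers: Vec<i64> = Vec::new(); // never shared with anyone
        // The loop ends once every sender has been dropped.
        for msg in rx {
            match msg {
                Message::Add(mut batch) => numbers.append(&mut batch),
                Message::Sum(reply) => {
                    let _ = reply.send(numbers.iter().sum());
                }
            }
        }
    });
    tx
}

fn main() {
    let worker = spawn_worker();
    worker.send(Message::Add(vec![1, 2, 3])).unwrap();
    worker.send(Message::Add(vec![10])).unwrap();
    // Replies come back through a channel sent along with the request.
    let (reply_tx, reply_rx) = mpsc::channel();
    worker.send(Message::Sum(reply_tx)).unwrap();
    println!("{}", reply_rx.recv().unwrap()); // 16
}
```

Because `Message` is an enum, the `match` in the worker must be exhaustive: adding a new variant without handling it is a compile error.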

WebAssembly gives us even more power here than Elixir has. With Wasm we can limit resource consumption on a per-process basis and spawn processes that can only use a limited amount of memory or CPU. That way we can catch individual processes that start to consume too much memory, something that was not possible in Elixir until Erlang 19.0 added a feature to set a maximum heap size per process.
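A toy model of what a per-process memory cap means mechanically. WebAssembly linear memory grows in 64 KiB pages, and the `memory.grow` instruction returns -1 when memory cannot grow; the sketch assumes a cap expressed as a maximum number of pages. `LinearMemory` and `allocations_before_limit` are hypothetical names, not lunatic's API or configuration.

```rust
/// WebAssembly linear memory grows in pages of 64 KiB.
const WASM_PAGE_SIZE: usize = 64 * 1024;

/// Toy model of one process's linear memory with a per-process cap (hypothetical, not lunatic's API).
struct LinearMemory {
    pages: usize,
    max_pages: usize,
}

impl LinearMemory {
    fn with_limit(max_bytes: usize) -> Self {
        LinearMemory { pages: 0, max_pages: max_bytes / WASM_PAGE_SIZE }
    }

    /// Modeled on Wasm's `memory.grow`: returns the previous size in pages,
    /// or -1 if growing would exceed this process's cap.
    fn grow(&mut self, delta: usize) -> i64 {
        if self.pages + delta > self.max_pages {
            return -1;
        }
        let previous = self.pages;
        self.pages += delta;
        previous as i64
    }
}

/// A greedy "process" that keeps growing its memory until the cap refuses.
/// Returns how many growth steps succeeded.
fn allocations_before_limit(limit_bytes: usize, pages_per_step: usize) -> usize {
    assert!(pages_per_step > 0, "a zero-sized step would never hit the limit");
    let mut memory = LinearMemory::with_limit(limit_bytes);
    let mut steps = 0;
    while memory.grow(pages_per_step) != -1 {
        steps += 1;
    }
    steps
}

fn main() {
    // A 1 MiB cap is 16 pages, so growing 4 pages at a time succeeds 4 times.
    let steps = allocations_before_limit(1024 * 1024, 4);
    println!("process hit its memory cap after {steps} allocations"); // 4
}
```

Since every process has its own linear memory, the refusal only affects the process that asked for more, which is what makes the failure easy to attribute and contain.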

When I started using WebAssembly to solve this problem, it wasn't well known, let alone popular, outside the browser, but it is slowly becoming the way to ship backend applications. The same feature that allows lunatic to spawn sub-processes can also be used to load other .wasm modules at runtime and spawn processes from them. These modules don't even need to be written in Rust, so lunatic can now be used as a general-purpose WebAssembly runtime.

WebAssembly also gave us other, unexpected benefits. The lunatic VM ships with an async executor, TCP/UDP stacks, messaging capabilities, and other features. This means the WebAssembly module itself doesn't contain any of this code and can just call into the VM for that functionality. The result is very fast compile times, because there is simply much less to compile. I didn't even bother hiding some of lunatic's features behind feature flags, because compile time was never an issue.
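The split between a thin module and a feature-rich VM can be modeled natively with a trait. In an actual .wasm module these calls would be WebAssembly imports that the VM provides when it instantiates the module; the trait only stands in for that boundary so the sketch runs without a runtime. `Host`, `Vm`, and `guest_main` are hypothetical names, not lunatic's API.

```rust
/// Hypothetical host interface: services the VM implements natively
/// (networking, messaging, timers, ...), so the guest module only needs to call them.
trait Host {
    fn send_message(&mut self, to_process: u64, payload: &[u8]);
    fn now_millis(&self) -> u64;
}

/// Stand-in for the VM: it owns the heavy machinery; here it just records sent messages.
struct Vm {
    outbox: Vec<(u64, Vec<u8>)>,
    clock_millis: u64,
}

impl Host for Vm {
    fn send_message(&mut self, to_process: u64, payload: &[u8]) {
        self.outbox.push((to_process, payload.to_vec()));
    }

    fn now_millis(&self) -> u64 {
        self.clock_millis
    }
}

/// Guest code: no executor or network stack is compiled in, only calls through `Host`.
fn guest_main(host: &mut impl Host) {
    let t = host.now_millis();
    host.send_message(7, format!("hello at {t}").as_bytes());
}

fn main() {
    let mut vm = Vm { outbox: Vec::new(), clock_millis: 1_000 };
    guest_main(&mut vm);
    println!("{}", String::from_utf8_lossy(&vm.outbox[0].1)); // hello at 1000
}
```

Only `guest_main` would end up in the compiled module; everything behind the trait lives in the VM, which is why the module stays small and quick to build.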

Something else that we got for free in distributed lunatic is the ability to spawn processes on any kind of node, no matter the CPU architecture or operating system. Each WebAssembly module is transferred to the node and then JIT compiled for the specific architecture before execution.

Phase 2 - Dynamic types in Rust? Static types in Elixir?

This neat trick of using WebAssembly as a layer between Rust and machine code is what made lunatic possible, and that breakthrough led me to write the original blog post. But if you take a closer look at the Rust code you needed to write back then, you will realize that it doesn't feel like writing Elixir.

Message passing and pattern matching work really well in Elixir because it's a dynamically typed language. When I tried to translate these patterns to Rust, I couldn't figure out a way of fitting them into Rust's strict type system. I looked at what other Rust message-passing libraries do and how messages are handled in Go, Gleam and other languages. This led me to try a channel-based approach: each process can capture a set of channels from its parent, each used to receive messages of a specific type. This sounded like a reasonable approach at first, but once I tried it in a bigger project I realized that it's very easy to deadlock yourself by waiting on the wrong message type. It just didn't feel right.
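The pitfall of the channel-per-type approach can be reproduced with the standard library's `std::sync::mpsc`. This is a sketch of the general problem, not of lunatic's earlier API; `Ping`, `Shutdown`, and `wait_for_ping` are hypothetical names.

```rust
use std::sync::mpsc::{self, RecvTimeoutError};
use std::time::Duration;

// Two message types, each delivered on its own typed channel (hypothetical names).
struct Ping;
struct Shutdown;

/// Waits for a Ping, ignoring every other channel the process may hold.
fn wait_for_ping(ping_rx: &mpsc::Receiver<Ping>) -> Result<Ping, RecvTimeoutError> {
    // With a plain `recv()` this would block forever; the timeout only keeps the demo finite.
    ping_rx.recv_timeout(Duration::from_millis(100))
}

fn main() {
    let (ping_tx, ping_rx) = mpsc::channel::<Ping>();
    let (shutdown_tx, shutdown_rx) = mpsc::channel::<Shutdown>();

    // The parent sends a Shutdown but still holds `ping_tx`, so the Ping channel stays open.
    shutdown_tx.send(Shutdown).unwrap();

    // The child waits on the wrong channel while the message it needs sits in another one.
    let outcome = wait_for_ping(&ping_rx);
    println!("timed out: {}", matches!(outcome, Err(RecvTimeoutError::Timeout)));
    println!("Shutdown was pending: {}", shutdown_rx.try_recv().is_ok());

    drop(ping_tx);
}
```

Nothing in the types warns about this: both channels are individually well typed, and the bug only shows up as a process that never wakes up.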

Solving this problem has been my main focus over the last 18 months. That, and rewriting the whole VM from scratch for a more modular design, but that story is for another post. With the recent release of version 0.9, I finally arrived at a point where I feel we have found a great balance between Elixir's patterns and Rust's type safety. Check out the release notes for code examples.

With protocols, we added session types to our library to guarantee message ordering and type correctness at compile time. AbstractProcesses and Supervisors have a similar feel to Elixir's GenServers and Supervisors, but they also bring type safety with them, and the Rust compiler will always remind us if we don't handle a specific message type.
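The kind of compile-time ordering guarantee that session types provide can be sketched with the typestate pattern in plain Rust. This is not lunatic's protocol API: `Session`, `SendRequest`, `AwaitReply`, and `Done` are hypothetical names, and a real session would carry messages over a channel instead of logging them.

```rust
use std::marker::PhantomData;

// States of a hypothetical request/reply protocol, encoded as types.
struct SendRequest;
struct AwaitReply;
struct Done;

/// An endpoint whose type records which protocol step comes next.
struct Session<State> {
    log: Vec<String>,
    _state: PhantomData<State>,
}

impl Session<SendRequest> {
    fn new() -> Self {
        Session { log: Vec::new(), _state: PhantomData }
    }

    /// Only available as the first step; consumes the session and moves it to `AwaitReply`.
    fn send(mut self, request: &str) -> Session<AwaitReply> {
        self.log.push(format!("-> {request}"));
        Session { log: self.log, _state: PhantomData }
    }
}

impl Session<AwaitReply> {
    /// Only available after `send`; in a real system the reply would arrive from the peer.
    fn recv(mut self, reply: &str) -> Session<Done> {
        self.log.push(format!("<- {reply}"));
        Session { log: self.log, _state: PhantomData }
    }
}

impl Session<Done> {
    fn transcript(self) -> Vec<String> {
        self.log
    }
}

fn main() {
    let done = Session::<SendRequest>::new().send("GET /").recv("200 OK");
    // Calling `recv` first would not compile: `Session<SendRequest>` has no `recv` method.
    println!("{:?}", done.transcript()); // ["-> GET /", "<- 200 OK"]
}
```

Because each step consumes the session and returns it in the next state, skipping or reordering steps is a type error rather than a runtime deadlock.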

As we keep writing more Rust the Elixir way, we will be extending these concepts and probably inventing others. But at this point I feel we have built the right foundation for a good developer experience, to the point that I now prefer writing lunatic Rust over async Rust.

Next phase - What still needs some work?

WebAssembly is the biggest reason for lunatic's success, but it comes with its own issues. One big drawback is that not all Rust crates compile to it. For example, if you want to use criterion to benchmark your Rust code inside lunatic, you will need to use a feature branch that has not yet been merged into master.

Almost none of the Rust async ecosystem compiles to WebAssembly, but I personally don't consider this a big issue. I would, however, like to see more lunatic-powered frameworks (db, web, …). Teymour Aldridge from our community has been building an experimental web framework called puck with “LiveView” support. Now that we have a stable base library, I will be putting more effort into supporting lunatic's library ecosystem.

If you enjoyed this blog post and like lunatic's approach, join our Discord community. We already have over 400 members 🎉.